Introduction

Real-time electronic distributed control systems are an important development of the technological evolution. Electronics are employed to control and monitor the most safety-critical applications from flight decks to hospital operating rooms. As these real-time systems become increasingly prevalent and advanced, so does the demand to physically distribute the control in strict real-time. Thus there is a need for control network protocols that can support the application's stringent real-time requirements. Real-time networks must provide a guarantee of service so that they will consistently operate deterministically and correctly.

Ethernet, as defined in IEEE 802.3, is non-deterministic and thus it is unsuitable for hard real-time applications. The media access control protocol, CSMA/CD with its truncated binary exponential backoff algorithm, does not allow the network to support hard real-time communication as it incorporates random delays and allows for the possibility of transmission failure.

Decreasing costs and increasing demand for a single network type, from boardroom to plant-floor, have led to the development of Industrial Ethernet. The desire to incorporate a real-time element into this increasingly popular single-network solution has led to the development of different real-time Industrial Ethernet solutions. Fieldbus networking standards have failed to deliver an integrated solution. Typically the emerging real-time Industrial Ethernet solutions complement the fieldbus standards, for example by using common user layers. This course covers an introduction to real-time systems along with a study of Ethernet with emphasis on its suitability as a real-time network. Module 305 provides a study of the real-time Industrial Ethernet solutions available today.

Real-Time Introduction

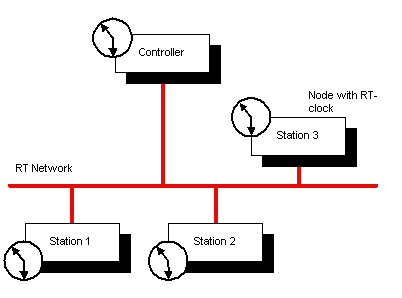

Real-Time (RT) systems are becoming increasingly important as industries focus on distributed computing in automation (see Figure 1). As computing costs decrease and computing power increases, industry has become more dependent on distributed computers to deliver efficiency and increased yield to the production lines. Real-time does not automatically mean faster execution; rather, it means that a process depends on the progression of time for valid execution.

Figure 1 — Distributed Real-Time Processing.

RT systems are those whose correct execution depends not solely on the logical validity of data but also on its timeliness. A correct RT system will guarantee the successful operation of a system — so far as its timely execution is concerned. RT systems are generally broken into two main sub-categories: hard and soft.

Hard Real-Time (HRT) systems are those in which incorrect operation can lead to catastrophic events. Errors in HRT systems can cause accidents or even death. Such systems are typically found in flight control or train control systems, where an error could potentially incur loss of life.

Soft Real-Time (SRT) systems, on the other hand, are not as brittle. An error in an SRT system, while undesirable, will not cause loss of property or life. SRT systems are not as safety-critical as HRT systems, and should not be employed in a safety-critical situation. Examples of SRT systems are online reservation systems and streaming multimedia applications, where slight occasional delays might cause small inconvenience but will not have serious impact.

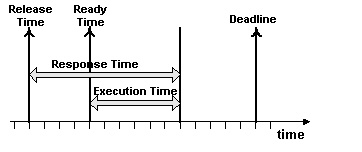

Jobs are the real-time system's building blocks. Each real-time job has certain temporal quantities (Figure 2) associated with it: Release Time, Ready Time, Execution Time, Response Time, and Deadline.

Figure 2 — Temporal Quantities of Each Real-Time Job

The Release Time of a job is when the job becomes available to the system. The Ready Time is the earliest time the job can start executing (always greater than or equal to the Release Time). The Execution Time is the time it takes for a job to be completely processed. The Response Time is the interval between the release time and the completion of the execution. The Deadline is the time by which execution must be finished. If execution is not complete by the deadline, the job is late. A job's deadline can be either hard or soft, indicating the job's temporal dependence. As mentioned earlier, a missed hard deadline can have serious consequences for correct system operation. All real-time systems have a certain level of jitter. Jitter is the variation in the actual timing of the quantities above. In a real-time system, jitter should be measurable and bounded within a ± interval so that the system performance can still be guaranteed. For textbook information on real-time systems, refer to [1].
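The temporal quantities above can be made concrete with a small sketch. The `Job` class, its field names and the `peak_to_peak_jitter` helper are hypothetical and introduced only for illustration. The sketch assumes a job runs without interruption once started and that all times are in milliseconds.

```python
from dataclasses import dataclass


@dataclass
class Job:
    """Temporal quantities of one real-time job (illustrative, in milliseconds)."""
    release: float    # time the job becomes available to the system
    ready: float      # earliest time execution may start (>= release)
    execution: float  # time needed to process the job completely
    deadline: float   # time by which execution must be finished

    def completion(self, start: float) -> float:
        # Execution cannot begin before the ready time.
        return max(start, self.ready) + self.execution

    def response_time(self, start: float) -> float:
        # Interval between the release time and the completion of execution.
        return self.completion(start) - self.release

    def is_late(self, start: float) -> bool:
        # A job is late if it has not completed by its deadline.
        return self.completion(start) > self.deadline


def peak_to_peak_jitter(samples):
    """Spread between the largest and smallest observed timing value."""
    return max(samples) - min(samples)


job = Job(release=0.0, ready=1.0, execution=3.0, deadline=5.0)
print(job.response_time(start=1.0))  # 4.0
print(job.is_late(start=1.0))        # False
print(job.is_late(start=2.5))        # True
print(peak_to_peak_jitter([4.0, 4.2, 3.9, 4.1]))
```

Whether a late job is a failure or only an inconvenience depends on whether its deadline is hard or soft. The code only reports lateness.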

To develop a real-time distributed system, where computers are interconnected, it is vital to employ a network that can provide communication between the various distributed computers in a reliable and timely fashion. Distributed processors running real-time applications must be able to inter-communicate via a real-time protocol, otherwise the temporal quality of their work is lost. Real-Time Communication networks are like any real-time system. They can be hard or soft, depending on the system requirements and their 'jobs' include message transmission, propagation, and reception. There are a number of real-time control networks available and employed in industry, but none have the popularity or bandwidth capabilities of Ethernet. In the following section, we will discuss the demand for a real-time Ethernet solution.

The Demand for Real-Time Ethernet

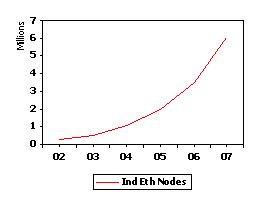

The demand for Ethernet as a real-time control network is increasing (see Figure 3) as manufacturers realise the benefits of employing a single network technology from the boardroom to the plant floor. Decreased product costs coupled with the possibility of overlapping training and maintenance costs for information, field level, control and possibly device networks would greatly reduce the expense to the manufacturers.

Figure 3 — Predicted Sales of Industrial Ethernet Nodes

Ethernet offers many benefits at the real-time control level over existing solutions. As a control network, 10 Gbps Ethernet offers bandwidth that is almost 1000 times that of today's comparable fieldbus networks (such as the 12 Mbps of PROFIbus) and can also support real-time communication. Distributed applications in control environments require tight synchronisation so that the delivery of control messages can be guaranteed within defined message cycle times. Typical cycle times for control applications are listed in Table 1. Traditional Ethernet and fieldbus systems are not capable of meeting cycle time requirements below a few milliseconds, but the emerging real-time Industrial Ethernet solutions allow cycle times as low as a few microseconds.

| Control Application | Typical Cycle Time |
| --- | --- |
| Low speed sensors (e.g. temperature, pressure) | Tens of milliseconds |
| Drive control systems | Milliseconds |
| Motion control (e.g. robotics) | Hundreds of microseconds |
| Precision motion control | Tens of microseconds |
| High speed devices | Microseconds |
| Electronic ranging (e.g. fault detection) | Hundreds of nanoseconds |

Table 1 — Typical Cycle Times for Control Applications

Along with the increased bandwidth and tight synchronisation, real-time Ethernet gives manufacturers the security of using a physical and data-link layer technology that has been standardised by both the IEEE and the ISO. Ethernet can reduce complexity by providing all the attributes required of a field, control or device network, which matters in operations that have up to 30 different networks installed at this level [2]. Furthermore, Ethernet devices can also support TCP/IP stacks so that Ethernet can easily gate to the Internet. This feature is attractive to users since it allows remote diagnostics, control, and observation of their plant network from any Internet-connected device around the world with a license-free web browser. Although Ethernet does introduce overhead through its minimum message data size (46 bytes), which is large in comparison to existing control network standards, its increased bandwidth, standardisation and integration with existing plant technology are good reasons to consider Ethernet as a control network solution.
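To put the 46-byte minimum data size in perspective, the sketch below estimates how long a short control message occupies the wire. The framing constants other than the 46-byte minimum are not given in this module; they are the standard IEEE 802.3 values for an untagged frame. The function names are hypothetical.

```python
# Standard IEEE 802.3 framing sizes for an untagged frame, in bytes.
PREAMBLE_SFD = 8     # preamble plus start-of-frame delimiter
HEADER = 14          # destination address, source address, length/type
MIN_DATA = 46        # minimum data field; shorter payloads are padded
FCS = 4              # frame check sequence
INTERFRAME_GAP = 12  # mandatory idle period after each frame (96 bit times)


def wire_bytes(payload_len: int) -> int:
    """Bytes of medium time consumed by one frame carrying payload_len bytes."""
    data = max(payload_len, MIN_DATA)  # pad short payloads up to the minimum
    return PREAMBLE_SFD + HEADER + data + FCS + INTERFRAME_GAP


def wire_time_us(payload_len: int, rate_mbps: float) -> float:
    """Time on the wire in microseconds (bits divided by Mbit/s)."""
    return wire_bytes(payload_len) * 8 / rate_mbps


def efficiency(payload_len: int) -> float:
    """Fraction of the occupied medium time that carries useful payload."""
    return payload_len / wire_bytes(payload_len)


print(wire_bytes(2))         # 84
print(wire_time_us(2, 100))  # 6.72
print(round(efficiency(2), 3))
```

Under these assumptions a 2-byte sensor value still costs 84 byte times on the wire, so the overhead is real but is offset by the much higher bit rate.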

Ethernet and CSMA/CD

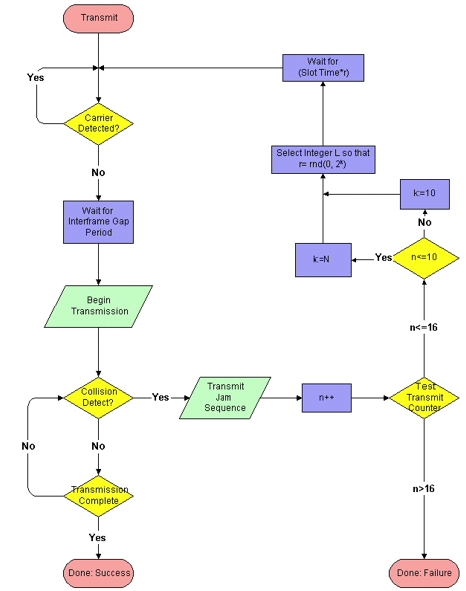

Ethernet, as defined in IEEE 802.3, is unsuitable for strict real-time industrial applications because its communication is non-deterministic. This is due to the definition of the network's media access control (MAC) protocol, which is based on Carrier Sense Multiple Access with Collision Detection (CSMA/CD); see Figure 4. The implementation described in the standard uses a truncated binary exponential backoff algorithm.

Figure 4 — IEEE 802.3 CSMA/CD with Truncated Binary Exponential Algorithm Flow Chart

With CSMA/CD, each node can detect if another node is transmitting on the medium (Carrier Sense). When a node's Carrier Sense is on, it will defer transmission until it determines that the medium is free. If two nodes transmit simultaneously (Multiple Access), the network experiences a collision and all frames are destroyed. Nodes can detect collisions (Collision Detection) by monitoring the collisionDetect signal provided by the physical layer. When a collision occurs, the node transmits a jam sequence.

When a node begins transmission on the medium there is a certain time interval, called the Collision Window, during which a collision can occur. This window is large enough to allow the signal to propagate around the entire network/segment. When this time window is over, all (functioning) nodes should have their Carrier Sense on, and so would not attempt to commence transmission.

When a collision occurs, the truncated binary exponential backoff algorithm is employed at each 'colliding' node. The algorithm works as follows:

Initially: n:=0, k:=0, r:=0.

When a collision occurs, the node enters the algorithm:

- It increments n, the Transmit Counter, which counts the number of sequential collisions experienced by a node.

- If n > 16, (16 unsuccessful successive transmission attempts), transmission fails and the higher layers should be informed.

- If n <= 16, set k = min(n, 10) (Truncation).

- A random integer, r, is selected uniformly from the range 0 <= r < 2^k (Exponential and Binary).

- The node then waits r x slot_time before recommencing a transmission attempt.
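The steps above can be sketched in a few lines of Python. This is an illustrative sketch, not the standard's reference code. It assumes r is drawn uniformly from the integers 0 to 2^k − 1, as IEEE 802.3 specifies, and it assumes a slot time of 512 bit times (the 10/100 Mbps value from the standard, not stated in this module). The function and constant names are hypothetical.

```python
import random

SLOT_BITS = 512     # assumed slot time in bit times (10/100 Mbps Ethernet)
ATTEMPT_LIMIT = 16  # collision count beyond which transmission fails (as in the text)
BACKOFF_LIMIT = 10  # truncation point for the exponent k


def backoff_slots(n: int, rng: random.Random) -> int:
    """Slot times to wait after the n-th successive collision.

    Raises RuntimeError when n exceeds ATTEMPT_LIMIT, which models the
    transmission failure reported to the higher layers.
    """
    if n > ATTEMPT_LIMIT:
        raise RuntimeError("excessive collisions: transmission failed")
    k = min(n, BACKOFF_LIMIT)     # truncation
    return rng.randrange(2 ** k)  # uniform integer in 0 .. 2**k - 1


def backoff_delay_us(n: int, rate_mbps: float, rng: random.Random) -> float:
    """Backoff delay in microseconds: r slot times at the given bit rate."""
    return backoff_slots(n, rng) * SLOT_BITS / rate_mbps


rng = random.Random(1)  # seeded only to make the demonstration repeatable
for n in (1, 2, 3, 10, 16):
    upper = (2 ** min(n, BACKOFF_LIMIT) - 1) * SLOT_BITS / 10
    print(f"collision {n:2d}: wait {backoff_delay_us(n, 10, rng):8.1f} us "
          f"(worst case {upper:8.1f} us at 10 Mbps)")
```

The worst-case column shows the range of possible delays growing with each collision until the exponent is truncated at 10. The actual wait is random inside that range, which is the source of the non-determinism discussed below.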

One advantage of this backoff algorithm is that it controls the medium load. If the medium is heavily loaded, the likelihood of collisions increases, and the algorithm increases the interval from which the random delay time is chosen. This should lighten the load and reduce further collisions.

Ethernet's CSMA/CD with the truncated binary exponential backoff algorithm introduces the possibility of complete transmission failure as well as a random transmission delay. This is the source of IEEE 802.3's non-determinism and its unsuitability for real-time communications, especially on heavily loaded networks. Redefining the media access protocol would solve the problem but would leave the new nodes unable to interoperate with legacy Ethernet nodes.

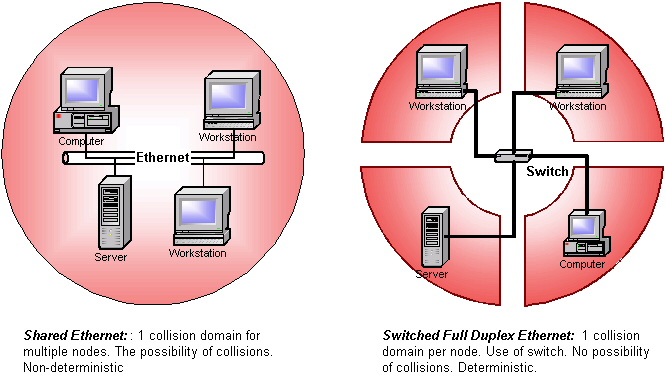

Ethernet is non-deterministic only if collisions can occur. To implement a completely deterministic Ethernet, it is necessary to avoid all collisions. A collision domain is a CSMA/CD segment where simultaneous transmissions can result in a collision. The probability of collision increases with the number of nodes transmitting on a single collision domain. Completely avoiding collisions in the Ethernet network, therefore, gives a network with a relatively large bandwidth and popularity an opportunity to be developed for the real-time domain. The most common way of implementing a collision-free Ethernet is through the use of hardware.
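A deliberately simple model illustrates why the collision probability grows with the number of nodes. It assumes that each node independently attempts to transmit in a given slot with the same probability p. This is an assumption made purely for illustration and not a model of real traffic. A collision is then the event that two or more nodes transmit in the same slot.

```python
def collision_probability(nodes: int, p: float) -> float:
    """Probability that two or more of `nodes` stations transmit in one slot.

    Assumes independent stations, each transmitting with probability p.
    """
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    idle = (1 - p) ** nodes                      # nobody transmits
    single = nodes * p * (1 - p) ** (nodes - 1)  # exactly one node transmits
    return 1 - idle - single


for n in (1, 2, 5, 10, 20, 50):
    print(n, round(collision_probability(n, 0.05), 4))
```

With a single node per collision domain the probability is zero, which is the situation that the segmentation approaches described in this module aim to create.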

A solution where two or more nodes compete for medium access to a network segment is called Shared Ethernet.

Full & Half Duplex Ethernet

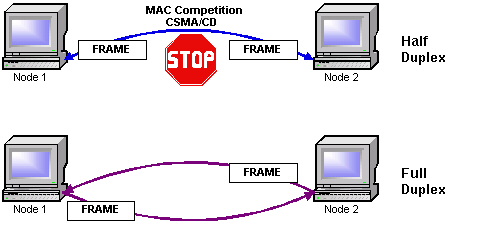

When Ethernet was standardised in 1985, all communication was half duplex. Half duplex communication restricts a node to either transmitting or receiving at any one time; it cannot perform both actions concurrently.

Nodes sharing a half duplex connection are operating in the same collision domain. This means that these nodes will compete for bus access, and their frames have the potential to collide with other frames on the network. Unless access to the bus is controlled at a higher level and highly synchronised across all the nodes co-existing on the collision domain, collisions can occur and real-time communication is not guaranteed.

Full duplex communication was standardised for Ethernet in the 1998 edition of 802.3 as IEEE 802.3x. With full duplex, a node can transmit and receive simultaneously. A maximum of two nodes can be connected on a single full duplex link. Typically this would be a node-to-switch or switch-to-switch configuration. (The set-up of Figure 5 is for illustration purposes, and unlikely to be implemented in a practical form). Theoretically, employing full duplex links can double the available network bandwidth taking it from 10 Mbps or 100 Mbps to 20 Mbps or 200 Mbps respectively, but in practice it is limited by the internal processing capability of each node. Full duplex communication provides every network node with a unique collision domain. This operation completely avoids collisions and does not even implement the traditional Ethernet CSMA/CD protocol.

Figure 5 — Half-Duplex vs. Full-Duplex

Since full duplex links can host a maximum of two nodes per link, such technology is not viable as an industrial real-time solution without the use of fast, intelligent switches capable of connecting links/segments as a network with single collision domains for each node — i.e. Switched Ethernet.

Full Duplex, Switched Ethernet

With shared Ethernet, nodes compete for access to the media in their shared collision domains. The use of a destructive non-deterministic bus access scheme, such as CSMA/CD, makes shared Ethernet unviable as a real-time network solution. The most popular method of collision-avoidance, which produces a deterministic Ethernet, is to introduce single collision domains for each node because this guarantees the node exclusive use of the medium, thereby eliminating access contention. This is achieved by implementing full duplex links as well as hardware devices such as switches and bridges. These devices have the ability to isolate collision domains through the segmentation of the network because each device port is configured as a single collision domain (see Figure 6). Although both switches and bridges can provide this segmentation, switches are more flexible because they can cater for more segments.

Switches

Switches are data-link layer hardware devices that enable the creation of single-collision domains through network segmentation. While a bridge operates like a switch, it only contains two ports. In contrast, switches contain more than two ports, where each port is connected to a segment (collision domain). Switches can operate in half duplex or full duplex mode. When full duplex switches are used along with full duplex capable nodes, there will be no collisions on any segment. Today's switches are more intelligent and faster than previously and with careful design and implementation could be used to achieve a hard real-time communication network using IEEE 802.3.

Figure 6 — Switched Full Duplex vs. Shared Ethernet

Although switches are data-link layer devices, today's switches are also capable of performing switching functions based on data from layers 3 and 4. Layer 3 switches can operate on information provided by IP — such as IP version, source/destination address or type of service. Layer 4 devices can switch depending on the source/destination port or even information from the higher-level application.

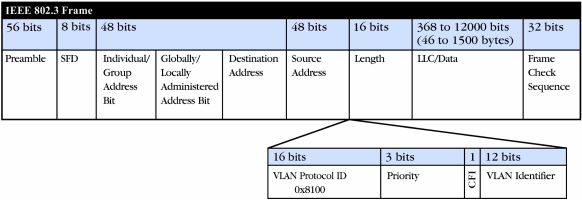

Further refinements to the IEEE 802 standards, specifically for switch operations, are 802.1p and 802.1Q. IEEE 802.1p, which was incorporated into the IEEE 802.1D [3] standard brings Quality of Service (QoS) to the MAC level and also defines how these switches should deal with prioritisation - priority determination, queue management etc. This is achieved through the introduction of a 3-bit priority field into the MAC header, which gives 8 (0–7) different priority levels decoded and upheld by switches or hubs. As defined, 802.1p can support priorities on topologies that are compatible with its prioritisation service, but to implement prioritisation on Ethernet, which does not include a prioritisation field in its frame format, it uses 802.1Q.

IEEE 802.1Q [4] defines an architecture for virtual bridged LANs, the services provided in virtual bridged LANs, and the protocols and algorithms involved in the provision of those services. 802.1Q allows Ethernet frames to support VLANs (Virtual Local Area Networks) limiting broadcast domains and thereby reducing broadcast traffic on the entire LAN. This is achieved by the insertion of 4 bytes between the source address field and the length/type field in the Ethernet frame's header, which among other identifiers, includes the identifier of the originating VLAN. Also included is a 3-bit prioritisation field required for 802.1p to implement prioritisation on Ethernet, see Figure 7.

Figure 7 — Extended Frame Format for IEEE 802.1Q
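As an illustration of the 4-byte insertion, the sketch below builds and parses an 802.1Q tag. The text gives only the overall size and the 3-bit priority field. The remaining layout used here (a 16-bit tag protocol identifier of 0x8100, a 1-bit canonical format indicator and a 12-bit VLAN identifier) is the standard 802.1Q layout. The function names are hypothetical.

```python
import struct

TPID_8021Q = 0x8100  # tag protocol identifier marking an 802.1Q tag


def build_tag(priority: int, vlan_id: int, cfi: int = 0) -> bytes:
    """Pack the 4-byte tag: TPID, then 3-bit priority, 1-bit CFI, 12-bit VLAN ID."""
    if not 0 <= priority <= 7:
        raise ValueError("priority must fit in 3 bits (0-7)")
    if cfi not in (0, 1):
        raise ValueError("cfi must be 0 or 1")
    if not 0 <= vlan_id <= 0xFFF:
        raise ValueError("vlan_id must fit in 12 bits")
    tci = (priority << 13) | (cfi << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)  # network (big-endian) byte order


def parse_tag(tag: bytes):
    """Return (priority, cfi, vlan_id) from a 4-byte tag."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return tci >> 13, (tci >> 12) & 0x1, tci & 0xFFF


def insert_tag(frame: bytes, tag: bytes) -> bytes:
    """Insert the tag after the 6-byte destination and 6-byte source addresses."""
    if len(frame) < 14:
        raise ValueError("frame shorter than an Ethernet header")
    return frame[:12] + tag + frame[12:]


tag = build_tag(priority=5, vlan_id=100)
print(tag.hex())       # 8100a064
print(parse_tag(tag))  # (5, 0, 100)
```

A real implementation would also recompute the frame check sequence after inserting the tag, which this sketch leaves out.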

For a real-time Industrial Ethernet application, an 802.1p/Q implementation has certain advantages: it introduces standardised prioritisation on Ethernet, allowing control engineers to implement up to eight different user-defined priority levels for their traffic. These standards also have drawbacks, including the extra hardware costs associated with the increased maximum Ethernet frame length (1522 bytes), which introduces compatibility issues with legacy Ethernet networks. A real-time implementation using 802.1p/Q would require full duplex, switched Ethernet. IEEE 802.1p/Q are acceptable for certain applications of real-time Ethernet in industry when switch 'through' time is predictable and an overload situation will not result in hard deadlines being missed.
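One way a switch port might honour the eight priority levels is strict-priority queuing, sketched below. This is a simplified illustration rather than a description of any particular switch. It assumes that a larger priority number is always more urgent and that frames of equal priority leave in arrival order. The class and method names are hypothetical.

```python
import heapq
import itertools


class StrictPriorityPort:
    """Egress queue that always sends the highest-priority waiting frame first."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker: keeps FIFO order per priority

    def enqueue(self, priority: int, frame: str) -> None:
        if not 0 <= priority <= 7:
            raise ValueError("priority must be in 0-7")
        # heapq is a min-heap, so the priority is negated to pop the largest first.
        heapq.heappush(self._heap, (-priority, next(self._seq), frame))

    def dequeue(self) -> str:
        _, _, frame = heapq.heappop(self._heap)
        return frame

    def __len__(self) -> int:
        return len(self._heap)


port = StrictPriorityPort()
port.enqueue(0, "diagnostics upload")
port.enqueue(7, "motion setpoint")
port.enqueue(3, "HMI update")
port.enqueue(7, "motion feedback")

order = [port.dequeue() for _ in range(len(port))]
print(order)
# ['motion setpoint', 'motion feedback', 'HMI update', 'diagnostics upload']
```

Prioritisation only reorders frames that are still waiting. A frame already being transmitted is not interrupted, and a sustained burst of high-priority traffic can still delay everything behind it, which is why predictable switch behaviour under overload matters.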

Although switches can certainly provide real-time deterministic communication on Ethernet and are the backbone of the Industrial Ethernet solutions available today, they have drawbacks. They are costly, which matters to cost-conscious industries. They are powered devices that can fail, a major factor for hard real-time control operations. And the manufacturer does not always guarantee their operational predictability. A study on switches for real-time applications is available at [5].

TCP/UDP/IP for Real-Time Ethernet

With Industrial Ethernet, the trend is to define an application layer environment along with the TCP/IP protocol suite to realise an industrial automation networking solution. Some real-time Ethernet solutions, e.g. EtherNet/IP, perform all their communication, real-time included, through the TCP/UDP/IP stack. Most solutions, although they provide compatibility with TCP/IP, do not employ this protocol suite for real-time communication. In a system like EtherNet/IP, TCP is used for initialisation and configuration (explicit) messages while UDP, with its reduced overhead, is used for real-time I/O (implicit messaging).
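The header-size part of UDP's reduced overhead can be estimated with a short calculation. The sizes below are the minimum IPv4, TCP and UDP header lengths without options; they are standard values rather than figures from this module. The function names are hypothetical.

```python
IPV4_HEADER = 20  # bytes, minimum IPv4 header (no options)
TCP_HEADER = 20   # bytes, minimum TCP header (no options)
UDP_HEADER = 8    # bytes, fixed UDP header

TRANSPORT_HEADERS = {"tcp": TCP_HEADER, "udp": UDP_HEADER}


def header_bytes(transport: str) -> int:
    """Network plus transport header bytes added to each message."""
    return IPV4_HEADER + TRANSPORT_HEADERS[transport]


def header_share(payload_len: int, transport: str) -> float:
    """Fraction of the IP packet taken up by headers."""
    overhead = header_bytes(transport)
    return overhead / (overhead + payload_len)


for transport in ("udp", "tcp"):
    print(transport, header_bytes(transport), round(header_share(8, transport), 3))
```

Header size is only part of the difference. TCP also adds connection set-up, acknowledgements and retransmissions, which make delivery times harder to bound for cyclic I/O data.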

Typically, for real-time Industrial Ethernet applications it is sufficient that the solution be compatible with TCP/IP and the protocol suite is bypassed for all real-time communication. The ability of a real-time Ethernet solution to intercommunicate with an office-based system is paramount to achieve the Ethernet-technology plant of the future.

Conclusion

This module has covered a broad introduction to Ethernet for real-time. It has described concepts that will be developed upon in the follow-up module: Real-Time Ethernet II. The follow-up module will provide detail on existing real-time Ethernet solutions such as PROFInet V3, ETHERNET Powerlink and EtherNet/IP along with an important supporting technology for clock synchronisation: IEEE 1588.

References

[1] Liu, J. Real-time Systems; Prentice Hall Inc., 2000; ISBN: 0130996513.

[2] Crockett, N. "Industrial Ethernet is ready to revolutionise the factory — Connecting the factory floor", Manufacturing Engineer, Volume: 82, Issue: 3, June 2003, pages 41–44.

[3] ISO/IEC 15802-3: 1998, ANSI/IEEE Std 802.1D, 1998 Edition. Information technology — telecommunications and information exchange between systems — local and metropolitan area networks — common specifications. Part 3: Media Access Control (MAC) bridges.

[4] IEEE Std 802.1Q-1998 IEEE standards for local and metropolitan area networks Virtual bridged local area networks.

[5] Georges, J.-P.; Rondeau, E.; Divoux, T. "Evaluation of switched Ethernet in an industrial context by using the Network Calculus", Factory Communication Systems, 2002. 4th IEEE International Workshop on 28–30 Aug. 2002, pages 19–26.